WhyLabs AI Control Center

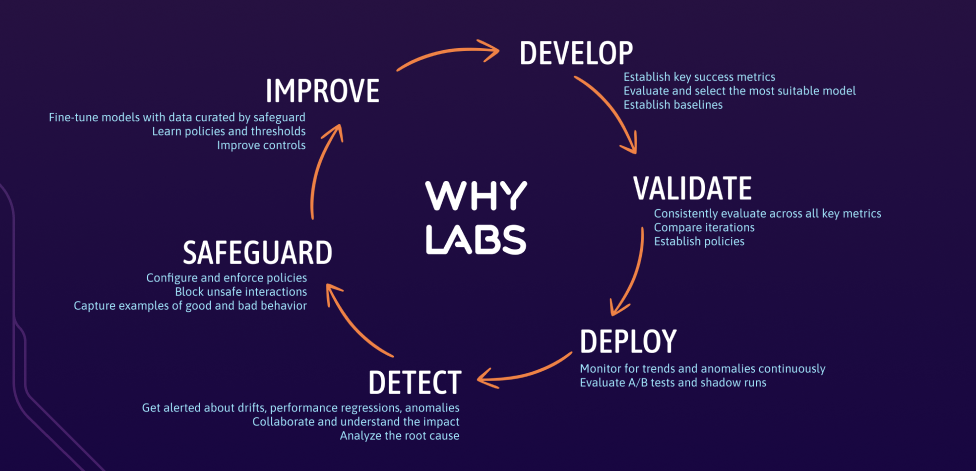

In the era of generative AI, the WhyLabs AI Control Center keeps AI applications secure as observability alone becomes insufficient. Now, teams can go beyond alerting on observed changes with real-time prevention, controlling for vulnerabilities and potential risks.

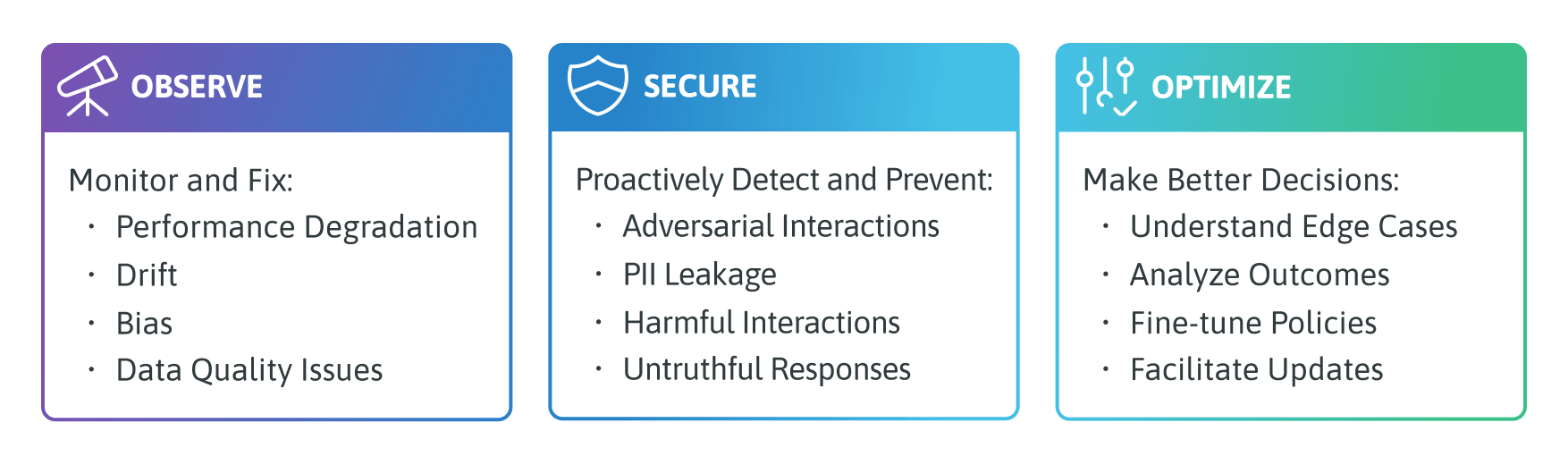

The AI Control Center provides features that are aligned with three groups of capabilities: Observe, Secure, and Optimize

Details about each capability can be found in their respective sections:

Observe

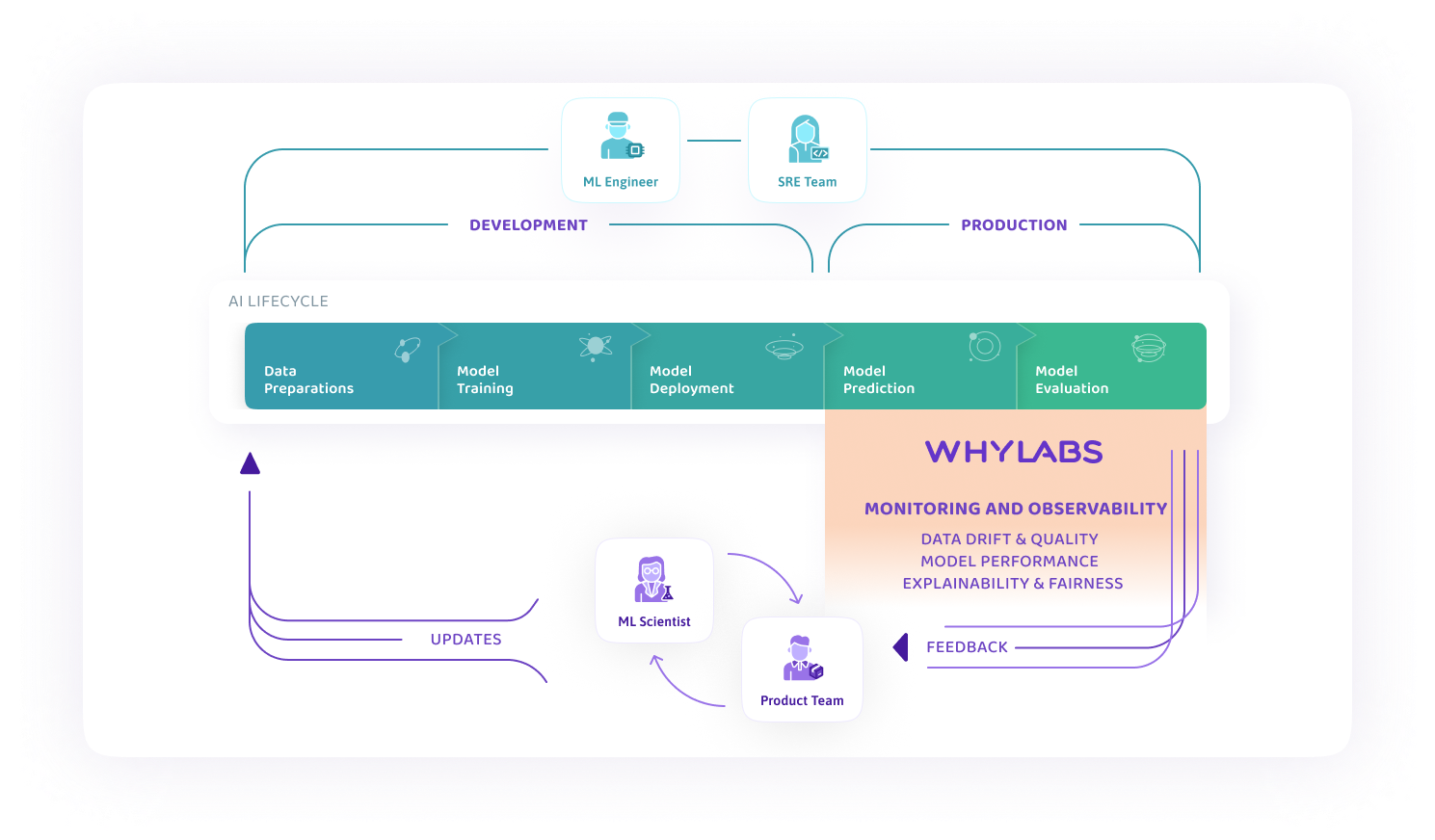

Regardless of the use case—batch inference models, live prediction services, or generative AI applications—Observe provides necessary observability and monitoring tools to operate them responsibly.

Infrastructure agnostic, real-time telemetry—with whylogs and LangKit open source libraries—are employed to enable anomaly detection and monitoring for drift, data quality issues, and performance degradation. With WhyLabs, teams have decreased time-to-resolution of AI issues by 10x.

Observe provides easy-to-use observability and monitoring tools for AI applications:

- Massively scalable and privacy preserving telemetry; work with 100% of the data, no sampling required

- Automatic model onboarding

- Smart monitor presets, with zero-config set-up workflows or enabled with a single click

- Templated configurations for notifications and alerts to drive standards across teams

- Debugging workflows for detecting data drift and concept drift

- Data cohorts to help identify problematic segments that might indicate model bias or data quality issues

- Fully customizable dashboards to visualize the health of all your AI applications in one location

WhyLabs AI Control Center offers a unique architecture that allows customers to switch on observability for 100% of the data, never sampling because sampling distorts the distributions and causes high rate of false alarms. Customers configure monitoring by connecting WhyLabs directly to training and inference data, in batch or real time.

This integration is cost effective and privacy-preserving, making it the best choice in high inference volume AI applications for organizations such as Healthcare and FinTech.

Secure

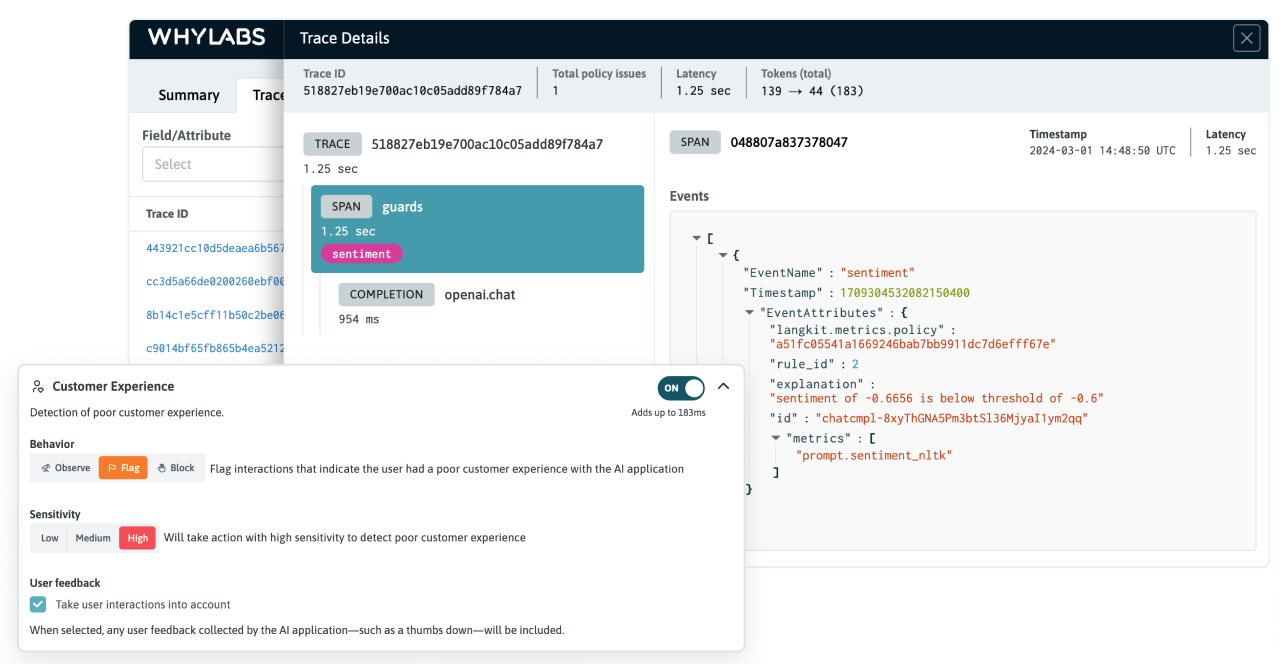

Passive observability tools alone are not sufficient for Generative AI applications, given the new classes of vulnerabilities that can negatively impact the performance of theses applications. WhyLabs Secure provides best-in-class tools to safeguard against risks and vulnerabilities in an easy-to-use interface.

Configure your LLM policy to protect against bad actors, abuse, and misuse. Ensure controls are in place to ensure interactions are compliant and result in a good customer experience. Detect potential hallucinations and check for relevancy with a unique solution that combines the power of a real-time guardrail with application tracing. WhyLabs Secure ensures the ultimate visibility and control around any guardrail decision, giving AI developers and stakeholders peace of mind.

Curated policy rulesets enable the most advanced threat detectors in minutes to be configured in a few clicks. Beyond that, users can customize threat detectors and continuously tune them on new examples, as well as customize system messages that steer the application toward safe behavior.

Optimize

WhyLabs Optimize creates feedback loops which help ML teams continuously optimize and control predictive ML and generative AI models in production.

The platform provides feedback tools for improving any aspect of your AI system. Observability and security generate insights necessary to improve the application experience. Using these insights, it's possible to create datasets for re-training predictive models or for setting up Reinforcement Learning from Human Feedback (RLHF) in generative models.

The WhyLabs approach to AI observability and monitoring is based on cutting edge research where flexibility, privacy, and scalability were prioritized. As a result, the platform provides many customizable options to enable use-case-specific implementations that can boost the feedback loop.